Hands-On: PHP and Big Data Development in Practice

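Big data is a term for the tools and techniques used to process large and complex data sets. One technique capable of processing large volumes of data is MapReduce.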

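When to use MapReduce

MapReduce is particularly well suited to problems that involve large amounts of data. It works by splitting the work into smaller chunks that can be processed by multiple systems. Because MapReduce partitions a problem and works on the parts in parallel, a solution is reached faster than on a traditional system.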
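The split, process, and combine idea can be sketched in a few lines of PHP. This is only a toy illustration: `sumChunk` and `chunkedSum` are hypothetical names, and the chunks are processed one after another in a single process, whereas a cluster would hand each chunk to a different node.

```php
<?php
// Toy illustration only: no parallelism happens here. It shows that the
// partial results of independent chunks can be combined into one answer.

/** Work done on one chunk: add up its numbers. */
function sumChunk(array $chunk): int
{
    return array_sum($chunk);
}

/** Split the input, process every chunk independently, then combine. */
function chunkedSum(array $numbers, int $chunkSize): int
{
    $partials = array_map('sumChunk', array_chunk($numbers, $chunkSize));
    return array_sum($partials);
}

echo chunkedSum(range(1, 10), 3), "\n"; // prints 55
```

Because each chunk is summed without looking at the others, the chunks could be handed out in any order, which is what makes this kind of problem easy to distribute.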

MapReduce is typically applied in scenarios such as the following:

1 Counting and statistics

2 Collating

3 Filtering

4 Sorting
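Filtering, for example, often needs only the map step: every line is checked on its own and either kept or dropped. A minimal sketch, assuming a case-insensitive substring match; `filterLines` is a hypothetical helper name and the sample lines are made up.

```php
<?php
/** Keep only the lines that contain $needle (case-insensitive). */
function filterLines(iterable $lines, string $needle): array
{
    $kept = [];
    foreach ($lines as $line) {
        $line = rtrim($line, "\r\n");
        // stripos() returns false when the needle does not occur
        if ($needle !== '' && stripos($line, $needle) !== false) {
            $kept[] = $line;
        }
    }
    return $kept;
}

// Hypothetical sample input
$lines = ["Call me Ishmael\n", "the whale\n", "WHALE ahead"];
foreach (filterLines($lines, 'whale') as $line) {
    echo $line, "\n";
}
```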

Apache Hadoop

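In this article we will use Apache Hadoop.

Hadoop is the recommended choice for developing MapReduce solutions: it is the de facto standard and is free, open-source software.

You can also rent or set up a Hadoop cluster with cloud providers such as Amazon, Google, and Microsoft.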

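It has several other advantages:

Scalable: new processing nodes can be added easily, without changing a single line of code.

Cost-effective: no specialized or exotic hardware is needed, because the software runs fine on commodity hardware.

Flexible: it is schemaless. It can process any data structure and can even combine multiple data sources without much trouble.

Fault-tolerant: if a node fails, other nodes take over its work and the cluster as a whole keeps processing.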

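In addition, Hadoop supports a utility called "Streaming", which gives users the freedom to choose the scripting language in which they develop their mappers and reducers.

In this article we will use PHP as the main development language.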
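In practice, a Streaming mapper or reducer is simply a program that reads lines from standard input and writes lines to standard output. By default, Hadoop Streaming treats the text before the first tab on a line as the key and the rest as the value. The sketch below shows that line format; `formatPair` and `parsePair` are illustrative helper names, not part of Hadoop or PHP.

```php
<?php
/** Format one key/value pair as a tab-delimited line. */
function formatPair(string $key, string $value): string
{
    return $key . "\t" . $value . "\n";
}

/** Parse a tab-delimited line into [key, value]; null if there is no tab. */
function parsePair(string $line): ?array
{
    // The limit of 2 splits on the first tab only, so the value may
    // itself contain tabs.
    $parts = explode("\t", rtrim($line, "\r\n"), 2);
    return count($parts) === 2 ? $parts : null;
}

echo formatPair('water', '1');          // prints "water<TAB>1"
var_dump(parsePair("water\t1\n"));      // array with "water" and "1"
```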

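Installing Hadoop

Installing and configuring Apache Hadoop is beyond the scope of this article. You can easily find many articles online for your platform. To keep things simple, we will only discuss the big-data side.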

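Mapper

The mapper's task is to convert its input into a series of key-value pairs. In the case of a word counter, the input is a series of lines. We split them into words and turn each word into a key-value pair (key: word, value: 1), which looks like this: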

the 1

water 1

on 1

on 1

water 1

on 1

... 1

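These pairs are then sent to the reducer for the next step.

Reducer

The reducer's task is to retrieve the (sorted) pairs, iterate over them, and convert them into the desired output. In the word counter example, it takes the word counts (values) and adds them up to get each word (key) with its final count, as follows: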

water 2

the 1

on 3

The whole process of mapping and reducing looks roughly like this; see the following diagram:
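The same flow can also be sketched in code. The following is an in-process illustration under stated assumptions: it needs PHP 7.1 or later, the function names `mapWords`, `sortByKey`, and `reduceSorted` are hypothetical, and the explicit sort stands in for the sorting that Hadoop performs between the mapper and the reducer.

```php
<?php
/** Map: emit a [word, 1] pair for every word in every line. */
function mapWords(array $lines): array
{
    $pairs = [];
    foreach ($lines as $line) {
        foreach (preg_split('/\s+/', trim($line), -1, PREG_SPLIT_NO_EMPTY) as $word) {
            $pairs[] = [$word, 1];
        }
    }
    return $pairs;
}

/** Sort: order the pairs by key so that equal keys become neighbours. */
function sortByKey(array $pairs): array
{
    usort($pairs, function (array $a, array $b): int {
        return strcmp($a[0], $b[0]);
    });
    return $pairs;
}

/** Reduce: add up the values of neighbouring pairs that share a key. */
function reduceSorted(array $pairs): array
{
    $totals = [];
    foreach ($pairs as [$key, $value]) {
        $last = count($totals) - 1;
        if ($last >= 0 && $totals[$last][0] === $key) {
            $totals[$last][1] += $value;
        } else {
            $totals[] = [$key, $value];
        }
    }
    return $totals;
}

$lines = ['the water on', 'on water on']; // hypothetical sample input
foreach (reduceSorted(sortByKey(mapWords($lines))) as [$word, $total]) {
    echo $word, "\t", $total, "\n";
}
```

For this sample the sketch prints `on 3`, `the 1`, and `water 2` (tab-delimited), the same totals as in the example above, listed in key order.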

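A word counter in PHP

We start with the "Hello World" of the MapReduce world: a simple word counter. We need some data to process, so we will experiment with the public-domain book Moby Dick.

Run the following command to download the book: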

wget http://www.gutenberg.org/cache ... 1.txt

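Create a working directory in HDFS (the Hadoop Distributed File System):

hadoop dfs -mkdir wordcount

Copy the downloaded book into HDFS:

hadoop dfs -copyFromLocal ./pg2701.txt wordcount/mobydick.txt

Our PHP code starts with the mapper: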

#!/usr/bin/php
<?php
// iterate through the lines on standard input
while (($line = fgets(STDIN)) !== false) {
    // remove leading and trailing whitespace
    $line = trim($line);

    // split the line into words
    $words = preg_split('/\s+/', $line, -1, PREG_SPLIT_NO_EMPTY);

    // iterate through the words
    foreach ($words as $word) {
        // print the word (key) and its count (1), tab-delimited, to standard
        // output; this output is consumed by the reduce step (reducer.php)
        printf("%s\t%d\n", $word, 1);
    }
}
?>
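Note that the mapper splits on whitespace only, so "Water," and "water" are counted as different words. One possible refinement, shown here as a sketch rather than as part of the original script, is to lowercase the line and keep only runs of letters and digits. The helper name `tokenize` is hypothetical, and the pattern assumes ASCII text.

```php
<?php
/**
 * Split a line into lowercase words, dropping surrounding punctuation.
 * Apostrophes inside a word (as in "don't") are kept.
 */
function tokenize(string $line): array
{
    preg_match_all("/[a-z0-9]+(?:'[a-z0-9]+)*/", strtolower($line), $matches);
    return $matches[0];
}

foreach (tokenize("The water, the WATER! Don't.") as $word) {
    printf("%s\t%d\n", $word, 1);
}
```

With this helper the sample line yields the keys `the`, `water`, `the`, `water`, and `don't`, so both spellings of "water" end up under the same key.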

Here is the reducer code:

#!/usr/bin/php
<?php
$last_key = null;
$running_total = 0;

// iterate through the lines on standard input
while (($line = fgets(STDIN)) !== false) {
    // remove leading and trailing whitespace
    $line = trim($line);

    // skip lines that are not a tab-delimited key/count pair
    if (strpos($line, "\t") === false) {
        continue;
    }

    // split the line into key and count
    list($key, $count) = explode("\t", $line, 2);

    // this if/else structure works because Hadoop sorts the mapper
    // output by key before sending it to the reducer
    if ($last_key === $key) {
        // same key as the previous line: increase its running total
        $running_total += (int) $count;
    } else {
        if ($last_key !== null) {
            // output the previous key and its running total
            printf("%s\t%d\n", $last_key, $running_total);
        }
        // reset the last key and running total to the new key and its value
        $last_key = $key;
        $running_total = (int) $count;
    }
}

// output the final key, which the loop above never prints
if ($last_key !== null) {
    printf("%s\t%d\n", $last_key, $running_total);
}
?>

You can easily test the scripts locally with a combination of commands and pipes:

head -n1000 pg2701.txt | ./mapper.php | sort | ./reducer.php

Now we run it on an Apache Hadoop cluster:

hadoop jar /usr/hadoop/2.5.1/libexec/lib/hadoop-streaming-2.5.1.jar \
    -mapper "./mapper.php" \
    -reducer "./reducer.php" \
    -input "wordcount/mobydick.txt" \
    -output "wordcount/result"

The output is stored in the folder wordcount/result and can be viewed with the following command:

hdfs dfs -cat wordcount/result/part-00000

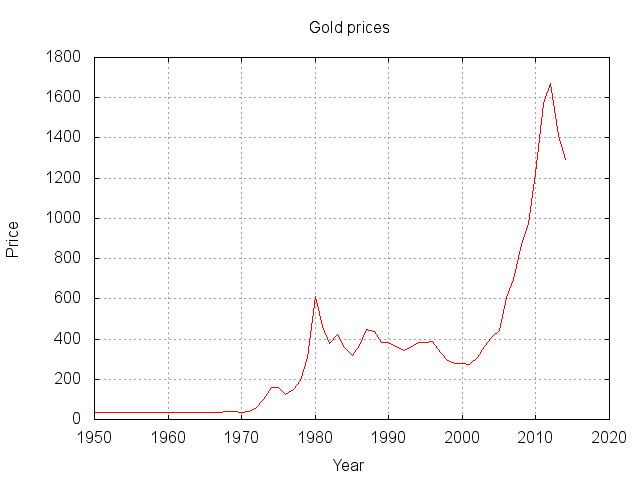

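Calculating the average annual gold price

The next example is more practical. Although the data set is relatively small, the same logic can easily be applied to far larger sets of data points. We will try to calculate the average annual gold price over the past fifty years.

Download the data set: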

wget https://raw.githubusercontent. ... a.csv

Create a working directory in HDFS (the Hadoop Distributed File System):

hadoop dfs -mkdir goldprice

Copy the downloaded data set into HDFS:

hadoop dfs -copyFromLocal ./data.csv goldprice/data.csv

My mapper looks like this:

#!/usr/bin/php
<?php
// iterate through the lines on standard input
while (($line = fgets(STDIN)) !== false) {
    // remove leading and trailing whitespace
    $line = trim($line);

    // capture the year (text before the first hyphen) and the gold price
    // (text after the last comma); lines that do not match are skipped
    if (preg_match('/^(.*?)-(?:.*),(.*)$/', $line, $matches) === 1) {
        // key: year, value: gold price
        printf("%s\t%.3f\n", $matches[1], (float) $matches[2]);
    }
}
?>
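To see what the regular expression captures, it helps to apply it to a couple of sample lines. The sketch below wraps the same pattern in a function; `parseGoldLine` is a hypothetical name, and the sample line `1950-01,34.73` is an assumed layout (a date with hyphens, a comma, then the price), not a row quoted from the data set.

```php
<?php
/**
 * Extract [year, price] from a line; returns null for lines that do not
 * match the pattern (for example a header row without a hyphen).
 */
function parseGoldLine(string $line): ?array
{
    if (preg_match('/^(.*?)-(?:.*),(.*)$/', trim($line), $m) !== 1) {
        return null;
    }
    return [$m[1], (float) $m[2]];
}

var_dump(parseGoldLine("1950-01,34.73\n")); // year "1950", price 34.73
var_dump(parseGoldLine("date,price"));      // NULL: no hyphen, so no match
```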

The reducer is also slightly modified, because we need to track the number of items and calculate the average.

#!/usr/bin/php
<?php
$last_key = null;
$running_total = 0;
$number_of_items = 0;

// iterate through the lines on standard input
while (($line = fgets(STDIN)) !== false) {
    // remove leading and trailing whitespace
    $line = trim($line);

    // skip lines that are not a tab-delimited key/value pair
    if (strpos($line, "\t") === false) {
        continue;
    }

    // split the line into key (year) and value (price)
    list($key, $price) = explode("\t", $line, 2);

    if ($last_key === $key) {
        // same key as the previous line: count the item and add its price
        $number_of_items++;
        $running_total += (float) $price;
    } else {
        if ($last_key !== null) {
            // output the previous key and its average
            printf("%s\t%.4f\n", $last_key, $running_total / $number_of_items);
        }
        // reset key, item count and running total for the new key
        $last_key = $key;
        $number_of_items = 1;
        $running_total = (float) $price;
    }
}

// output the final key and its average
if ($last_key !== null) {
    printf("%s\t%.4f\n", $last_key, $running_total / $number_of_items);
}
?>
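The averaging logic can be checked in isolation with a few made-up values. This is a sketch assuming PHP 7.1 or later; `averageSorted` is a hypothetical name, and the prices are invented so that the arithmetic is easy to follow.

```php
<?php
/**
 * Average the values of each key in a list of [key, value] pairs.
 * Assumes the pairs are already sorted by key, as reducer input is.
 */
function averageSorted(array $pairs): array
{
    $averages = [];
    $lastKey = null;
    $sum = 0.0;
    $items = 0;
    foreach ($pairs as [$key, $value]) {
        if ($key !== $lastKey) {
            if ($lastKey !== null) {
                $averages[] = [$lastKey, $sum / $items];
            }
            $lastKey = $key;
            $sum = 0.0;
            $items = 0;
        }
        $sum += $value;
        $items++;
    }
    if ($lastKey !== null) {
        $averages[] = [$lastKey, $sum / $items]; // flush the final key
    }
    return $averages;
}

// Hypothetical prices, not taken from the data set
$pairs = [['1950', 30.0], ['1950', 40.0], ['1951', 35.0]];
foreach (averageSorted($pairs) as [$year, $average]) {
    printf("%s\t%.4f\n", $year, $average);
}
```

Here 1950 averages to (30 + 40) / 2 = 35 and 1951, with a single value, also to 35. Keeping the sum and the item count and dividing only on output gives the same result as recalculating the average on every line.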

As with the word count example, we can test it locally:

head -n1000 data.csv | ./mapper.php | sort | ./reducer.php

Finally, run it on the Hadoop cluster:

hadoop jar /usr/hadoop/2.5.1/libexec/lib/hadoop-streaming-2.5.1.jar \
    -mapper "./mapper.php" \
    -reducer "./reducer.php" \
    -input "goldprice/data.csv" \
    -output "goldprice/result"

View the averages:

hdfs dfs -cat goldprice/result/part-00000

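Bonus: generating a chart

We often want to turn results into a chart. For this demo I will use gnuplot, but you can use any other tool you like.

First, fetch the result to the local machine:

hdfs dfs -get goldprice/result/part-00000 gold.dat

Create a gnuplot configuration file (gold.plot) with the following contents:

# Gnuplot script file for plotting gold prices
set terminal png
set output "chart.png"
set style data lines
unset key
set grid
set title "Gold prices"
set xlabel "Year"
set ylabel "Price"
plot "gold.dat"

Generate the chart:

gnuplot gold.plot

This produces a file named chart.png, which looks like this: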

Translator: Du Jiang (杜江)

Author: Glenn De Backer

Original: https://www.simplicity.be/article/big-data-php/

This article is published on 21CTO with authorization; do not reproduce it without permission.
